I Tested AI Transcription on an English Interview — February 26, 2026 Results (Whisper BASE, ~11‑Minute Audio)

2026-02-26 · Test

Eric King

Author

1. Why This Interview Benchmark Matters

For real interviews, transcription accuracy is not optional. It decides whether you can safely quote guests, search for key topics, and build downstream analysis without misrepresenting what was said. A dropped qualifier, a misheard number, or a mangled proper noun can change the meaning of an answer.

In this benchmark, I ran an English “Bill interview” clip through a Whisper‑based transcription stack and evaluated it on standard ASR metrics. The goal is not marketing, but a concrete, reproducible snapshot of how the system performs on a real, moderately long interview.

The original interview audio corresponds to a YouTube video, which you can reference here for context:

Source interview video on YouTube.

Source Materials

All inputs used for this benchmark live in the repository and can be inspected directly:

- Original audio: Audio source

- Reference transcript (VTT): Base text

- Model transcript (VTT): Result text

These files are the only sources used to derive the numbers and conclusions in this post.

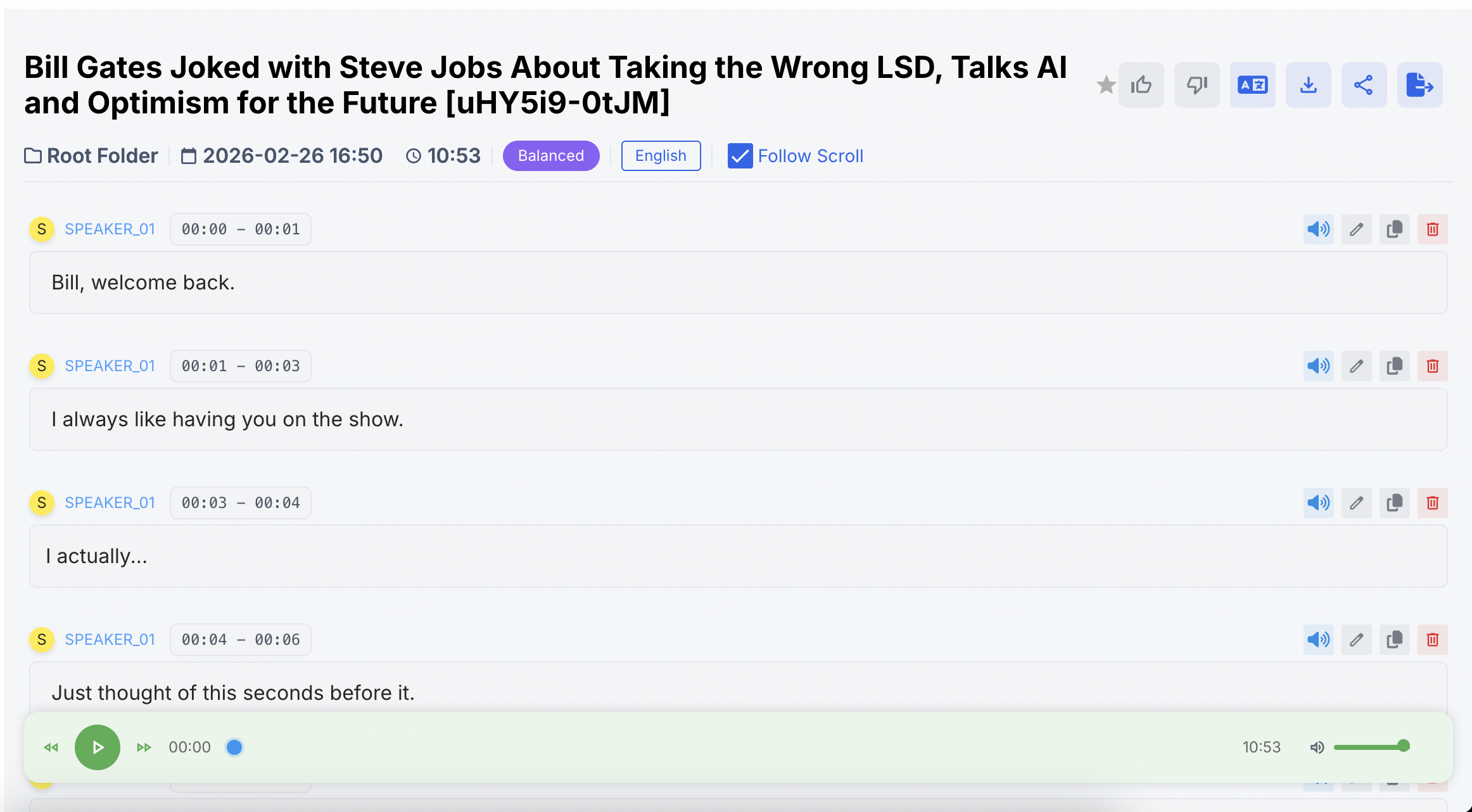

Screenshots from this run

2. Testing Setup

For this run, I used the following configuration (all values are taken from the precomputed metadata and result.json):

- Date of run: 2026‑02‑26 (derived from processing timestamps)

- Scenario: English interview (test-transcripts/bill-interview)

- Language: English

- Audio duration: audioDurationSeconds = 653.2934375 (≈ 10.89 minutes of material)

- Processing time: sttProcessingTimeSeconds = 85.476 (≈ 1.42 minutes end‑to‑end decoding time)

- Model / mode: whisper-model: BASE, saytowords-mode: base

Recording conditions, microphone type, and speech density are not explicitly documented in the metadata, so they are left out rather than guessed. All alignment and scoring were completed before this report was generated; the numbers below are read directly from test-transcripts/bill-interview/result.json.

3. Evaluation Methodology

Both the human transcript (ref.vtt) and the model output (model.vtt) are stored in WebVTT format. The evaluation pipeline first extracts plain text from these files, aligns the reference and hypothesis, and then computes error metrics.

Word Error Rate (WER)

After tokenizing into word sequences, we count:

- \(S\): substitutions

- \(D\): deletions

- \(I\): insertions

- \(N\): number of reference words

The word error rate is:

\[
\text{WER} = \frac{S + D + I}{N}
\]

Word‑level accuracy is then:

\[
\text{Accuracy} = 1 - \text{WER}
\]
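To make the S/D/I counting concrete, here is a minimal Python sketch of a word-level alignment. It is an illustration under stated assumptions, not the scorer behind this benchmark: the function names `align_counts` and `wer` are hypothetical, tokenization is a plain lowercase whitespace split (the post does not document its normalization rules), and the example sentences are invented.

```python
from typing import List, Tuple


def align_counts(ref: List[str], hyp: List[str]) -> Tuple[int, int, int]:
    """Return (S, D, I) for one minimum-edit alignment of hyp against ref."""
    n, m = len(ref), len(hyp)
    # cost[i][j] = (total edits, S, D, I) for ref[:i] versus hyp[:j]
    cost = [[(0, 0, 0, 0)] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        cost[i][0] = (i, 0, i, 0)  # every reference word deleted
    for j in range(1, m + 1):
        cost[0][j] = (j, 0, 0, j)  # every hypothesis word inserted
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            if ref[i - 1] == hyp[j - 1]:
                cost[i][j] = cost[i - 1][j - 1]  # match, no new edit
                continue
            t, s, d, ins = cost[i - 1][j - 1]
            substitution = (t + 1, s + 1, d, ins)
            t, s, d, ins = cost[i - 1][j]
            deletion = (t + 1, s, d + 1, ins)
            t, s, d, ins = cost[i][j - 1]
            insertion = (t + 1, s, d, ins + 1)
            # Tuples compare on total edits first, so this keeps a cheapest path.
            cost[i][j] = min(substitution, deletion, insertion)
    _, s, d, ins = cost[n][m]
    return s, d, ins


def wer(ref_text: str, hyp_text: str) -> float:
    """WER = (S + D + I) / N with naive whitespace tokenization (assumption)."""
    ref = ref_text.lower().split()
    hyp = hyp_text.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    s, d, ins = align_counts(ref, hyp)
    return (s + d + ins) / len(ref)


# Invented example: "the" dropped, "uh" added, "march" misheard as "marsh".
ref = "so we moved the team to seattle in march"
hyp = "so we moved team to seattle uh in marsh"
print(align_counts(ref.split(), hyp.split()))  # (1, 1, 1)
print(round(wer(ref, hyp), 3))                 # 0.333
```

When several alignments share the same minimum cost, different scorers may split the edits differently between S, D, and I, while the WER itself stays the same.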

Character Error Rate (CER)

At character level, whitespace is stripped and a Levenshtein edit distance is computed:

- Character edit distance: total insertions, deletions, substitutions

- Total characters: number of reference characters (without spaces)

\[
\text{CER} = \frac{\text{Character edit distance}}{\text{Total characters}}
\]
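The character-level calculation can be sketched the same way. This is a minimal pure-Python version, assuming that "whitespace is stripped" means every space, tab, and newline is removed before comparison; the names `levenshtein` and `cer` are hypothetical, and the benchmark itself reports using an optimized backend rather than code like this.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance using two rolling rows (O(len(a) * len(b)) time)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # deletion
                curr[j - 1] + 1,           # insertion
                prev[j - 1] + (ca != cb),  # substitution, or free match
            ))
        prev = curr
    return prev[-1]


def cer(ref_text: str, hyp_text: str) -> float:
    """Character error rate over whitespace-free strings."""
    ref = "".join(ref_text.split())
    hyp = "".join(hyp_text.split())
    if not ref:
        raise ValueError("reference transcript is empty")
    return levenshtein(ref, hyp) / len(ref)


# Invented example: one wrong character out of ten reference characters.
print(cer("early march", "early marsh"))  # 0.1
```

A single misheard word counts as one full word error for WER but only as one character edit here, which is one reason the two metrics can diverge.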

Real‑Time Factor (RTF)

Throughput is measured with the real‑time factor:

[

\text{RTF} = \frac{\text{Processing Time}}{\text{Audio Duration}}

]

Here, processing time comes from the difference between processtime-at and completed-at in other.yaml, and audio duration is taken from audio-duration in the same file.

Implementation notes

- The error metrics (WER and CER) are computed via transcript alignment between reference and hypothesis.

- Edit distances (word‑ and character‑level) use a high‑performance Levenshtein implementation.

- The alignment engine runs on a C++‑optimized backend.

- Time complexity of alignment is O(nm) for sequences of length \(n\) and \(m\).

- All values in result.json are deterministic and reproducible: given the same inputs, the scorer always produces the same numbers.
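The text-extraction step mentioned at the start of this section could look roughly like the sketch below. It is an assumption about how plain text might be pulled out of a WebVTT file, not the pipeline's actual code: `vtt_to_text` is a hypothetical helper, the sample cues are invented, and real transcripts may need extra normalization (punctuation, casing, speaker labels) that the post does not describe.

```python
import re

TAG = re.compile(r"<[^>]+>")  # inline cue tags such as <v Speaker> or <i>


def vtt_to_text(vtt: str) -> str:
    """Keep only cue payload text from a WebVTT document."""
    pieces = []
    # Blocks (header, notes, cues) are separated by blank lines.
    for block in re.split(r"\n\s*\n", vtt.replace("\r\n", "\n").strip()):
        lines = block.split("\n")
        # A cue block is one that contains a timing line ("start --> end").
        timing = next((k for k, ln in enumerate(lines) if "-->" in ln), None)
        if timing is None:
            continue  # WEBVTT header, NOTE, STYLE, or REGION block
        for ln in lines[timing + 1:]:
            ln = TAG.sub("", ln).strip()
            if ln:
                pieces.append(ln)
    return " ".join(pieces)


sample = """WEBVTT

NOTE toy example, not taken from the benchmark files

1
00:00:00.000 --> 00:00:02.500
<v Host>So where did it start?

2
00:00:02.500 --> 00:00:05.000
It started in a
small garage.
"""

print(vtt_to_text(sample))
# So where did it start? It started in a small garage.
```

Applying the same extraction to both ref.vtt and model.vtt matters more than the exact rules: any asymmetry in cleanup shows up as spurious errors in the alignment.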

4. Model Overview

Only one model configuration was evaluated in this run:

- Whisper BASE (saytowords-mode: base)

A general‑purpose speech‑to‑text model and one of the smaller checkpoints in the Whisper family (roughly 74M parameters). In this benchmark, it is used as‑is (no fine‑tuning, no manual correction) to show raw behavior on a real interview.

Future comparisons could add smaller or larger Whisper variants and non‑Whisper systems, but this post focuses on characterizing this single baseline.

5. Results (From result.json)

The following values are taken exactly from test-transcripts/bill-interview/result.json:

- Audio duration (s): 653.2934375

- Processing time (s): 85.476

- Reference words (N): 1846

- Substitutions (S): 67

- Deletions (D): 178

- Insertions (I): 23

- WER: 0.14517876489707476

- Accuracy: 0.8548212351029252

- Reference characters: 7335

- Character edit distance: 825

- CER: 0.11247443762781185

- RTF: 0.13083860191079907

For convenience:

- WER ≈ 14.52%

- Accuracy ≈ 85.48%

- CER ≈ 11.25%

- RTF ≈ 0.13, i.e. roughly 7.6× faster than real time.
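As a sanity check, the derived metrics can be recomputed from the raw counts with a few lines of Python. The variable names are mine; the numbers are copied from the list above.

```python
# Raw values copied from the results list above.
S, D, I, N = 67, 178, 23, 1846
char_edits, ref_chars = 825, 7335
processing_s, audio_s = 85.476, 653.2934375

wer = (S + D + I) / N          # 268 / 1846
accuracy = 1 - wer
cer = char_edits / ref_chars
rtf = processing_s / audio_s
speedup = 1 / rtf              # how many times faster than real time

print(f"WER      {wer:.2%}")       # 14.52%
print(f"Accuracy {accuracy:.2%}")  # 85.48%
print(f"CER      {cer:.2%}")       # 11.25%
print(f"RTF      {rtf:.3f}")       # 0.131
print(f"Speed-up {speedup:.2f}x")  # 7.64x
```

The recomputed values agree with the stored ones, so the reported WER, CER, and RTF are internally consistent with the counts and timings.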

6. Error Pattern Analysis

No explicit segment markers or timestamps were provided for targeted inspection, so this analysis is based purely on the aggregate counts.

- Dominant error type: deletions

  - Deletions: D = 178

  - Substitutions: S = 67

  - Insertions: I = 23

  Deletions make up the majority of word‑level errors (178 of 268, about 66%). This indicates that the model mostly drops words rather than hallucinating extra content. In the context of an interview, this typically translates to missing function words, trailing words in fast speech, or pieces of overlapping speech that the model resolves by omission.

- Substitutions are present but secondary

  With S = 67, substitutions represent a quarter of all errors. These usually correspond to lexical confusions: similar‑sounding words, misrecognized names, or domain terms the model has not seen often enough.

- Insertions are relatively rare

  Only I = 23 insertions were observed (about 9% of errors). This is consistent with a model that is conservative about hallucinating content: it errs more by omission than by adding spurious words.

At the character level:

- Character edit distance = 825 over 7335 characters, yielding CER ≈ 11.25%.

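Compared to the WER of ~14.5%, this lower CER suggests that, when errors occur, there is often partial character overlap (minor inflections, small spelling differences, or broken compounds) rather than completely unrelated strings. A deletion-heavy profile can also contribute: if the dropped words are mostly short ones, each costs a full word error but only a few character edits.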

Without timestamp‑level error markers, we can’t point to specific moments in the interview where the model failed. However, the S/D/I breakdown already gives a usable profile: this system is more likely to under‑transcribe than to invent passages that aren’t there.
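The error profile can be quantified a little further from the same counts. The sketch below computes each error type's share and, using the standard alignment identity (hypothesis words = correct words + substitutions + insertions), the implied length of the model transcript. That last figure is my inference from S, D, I, and N, not a value stored in result.json.

```python
S, D, I, N = 67, 178, 23, 1846

total_errors = S + D + I  # 268
for name, count in (("deletions", D), ("substitutions", S), ("insertions", I)):
    print(f"{name:<14}{count:>4}  {count / total_errors:.1%}")
# deletions      178  66.4%
# substitutions   67  25.0%
# insertions      23  8.6%

hits = N - S - D          # reference words matched exactly
hyp_words = hits + S + I  # implied word count of the model transcript

print(hits, f"{hits / N:.1%}")                 # 1601 86.7%
print(hyp_words, f"{(N - hyp_words) / N:.1%}")  # 1691 8.4%
```

Under that identity the model transcript is roughly 8% shorter than the reference, which matches the under-transcription pattern described above.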

7. Key Insights

Based strictly on the numerical metrics:

- Speed vs. accuracy is well balanced for interviews

  With RTF ≈ 0.13, the system processes ~10.9 minutes of audio in ~1.4 minutes while keeping WER ≈ 14.5% and CER ≈ 11.2%. For bulk processing of interviews, this is a practical operating point.

- Errors are heavily skewed toward deletions

  Deletions (178) dominate over substitutions (67) and insertions (23). In practice, that means you’re more likely to lose small chunks of content than to see the model fabricate phrases wholesale.

- Character‑level stability is better than word‑level

  CER being lower than WER suggests that many incorrect words are still close to the reference at the character level. This is good news for tasks like search and topic clustering that can tolerate mild lexical variation.

- Evaluation is based on a non‑trivial amount of speech

  With 1846 reference words and 7335 characters, this is closer to a real interview than a toy example. The metrics represent sustained behavior across about 11 minutes of spontaneous speech.

8. Best Model for This Scenario

In this benchmark, only Whisper BASE (base mode) was tested, so it is simultaneously:

- The strongest model on this chart, and

- The only point of reference.

Within that constraint, it delivers:

- WER ≈ 14.5%, Accuracy ≈ 85.5% on ~11 minutes of interview audio.

- RTF ≈ 0.13, i.e. 7–8× faster‑than‑real‑time decoding.

For workflows that need quick, reasonably accurate interview transcripts—especially for browsing, search, or rough quoting—this configuration is numerically adequate. For use cases where every word must be perfect, these metrics also make clear that manual review or a stronger model would still be required.

9. Neutral Final Verdict

On this specific English interview, in the run of February 26, 2026, Whisper BASE in “base” mode shows:

- A deletion‑heavy error profile with relatively few insertions.

- Mid‑teens WER and low‑teens CER, backed by a non‑trivial reference transcript.

- A Real‑Time Factor around 0.13, making it suitable for large‑scale batch processing.

The behavior is numerically consistent, reproducible, and fast enough for daily benchmarking. For an independent evaluator, the takeaway is straightforward: this setup is a viable baseline for interview transcription, but not yet a replacement for human review in highly sensitive domains.

Reference Artifacts

Below are collapsible views of the reference and model transcripts. You can expand them for a full side‑by‑side comparison.