Whisper Medium on English YouTube Audio: Benchmark 2026-03-31 (WER, CER, RTF)

2026-03-31 | Test

Eric King

Author

This article documents a fixed-configuration run on English YouTube audio using Whisper medium. In result.json, the strict metric is WER 67.75% and Accuracy 32.25%, with a profile dominated by deletions (D=5722, S=68, I=0). This points to a coverage/alignment mismatch between the reference captions and the hypothesis more than to isolated lexical errors.

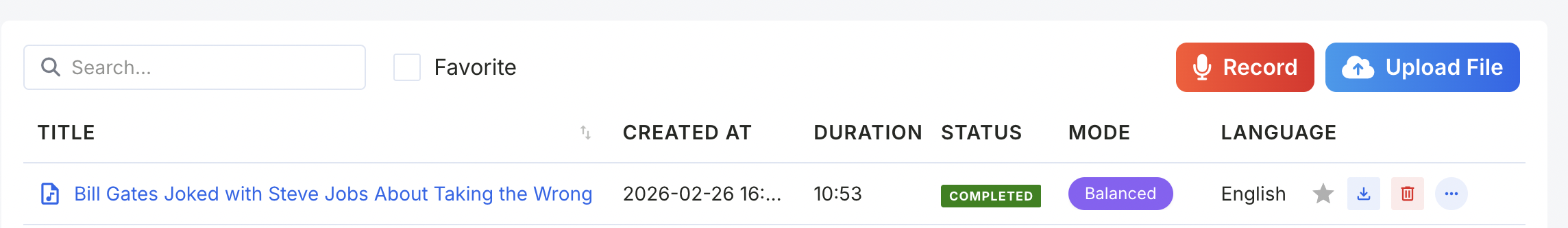

Video and reference text. Source video: https://www.youtube.com/watch?v=7J96ESznKMQ. The reference (ref.vtt) comes from the platform caption track, while model.vtt is the model output. So this benchmark measures agreement with platform captions (a practical baseline), not a manually curated linguistic gold standard.

1. Why This Benchmark Matters

Long-form YouTube audio is a realistic ASR stress case: changing pace, edits, names, topic shifts, and mixed speaking styles. For subtitle QA, retrieval indexing, and content repurposing, this setup is more representative than short clean demos.

Using platform captions as reference also answers a practical product question: how far does our ASR output drift from what end users already see as subtitles? Even if captions are not perfect gold labels, this comparison is operationally useful and reproducible.

2. Testing Setup

Values below are taken from other.yaml and result.json in case 20260331.

| Field | Value |
|---|---|
| Source | YouTube video |
| Date (processing window) | 2026-03-31 (processtime-at → completed-at) |
| Language | English |
| Whisper model | medium |
| Audio duration (YAML label) | 17:20 |
| Audio duration (scorer / YAML parsed) | 1040 s (≈ 17.33 minutes) |
| STT processing time | 133 s |
| RTF | 0.1279 |

Wall-clock timestamps: 2026-03-31 19:20:56 → 2026-03-31 19:23:09, consistent with 133 seconds processing time.
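The consistency check above can be reproduced with a few lines of JavaScript. This is a minimal sketch: the timestamps are treated as UTC only so the subtraction is timezone-independent (the run metadata quoted here does not state a zone), and `parseDurationLabel` is a hypothetical helper, not a function from the evaluation script.

```javascript
// Timestamps copied from the wall-clock line above.
// Assumption: parsed as UTC purely to make the difference zone-independent.
const startedAt = new Date("2026-03-31T19:20:56Z");
const completedAt = new Date("2026-03-31T19:23:09Z");

// Date subtraction yields milliseconds.
const processingSeconds = (completedAt - startedAt) / 1000;

// Hypothetical helper: parse the "mm:ss" duration label from the YAML.
function parseDurationLabel(label) {
  const [minutes, seconds] = label.split(":").map(Number);
  return minutes * 60 + seconds;
}

const audioSeconds = parseDurationLabel("17:20");
const rtf = processingSeconds / audioSeconds;

console.log(processingSeconds); // 133
console.log(audioSeconds);      // 1040
console.log(rtf.toFixed(4));    // 0.1279
```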

3. Evaluation Methodology

Evaluation script used: scripts/evaluate-vtt-metrics.js.

The script reads ref.vtt and model.vtt, extracts plain cue text, normalizes tokens, and aligns reference/hypothesis with Levenshtein dynamic programming. In the formulas below, S, D, and I are the substitution, deletion, and insertion counts from that alignment, and N is the reference word count.

\[
\mathrm{WER} = \frac{S + D + I}{N}, \qquad \mathrm{Accuracy} = 1 - \mathrm{WER}
\]

\[
\mathrm{CER} = \frac{\text{Character edit distance}}{\text{Reference character count (no spaces)}}
\]

\[
\mathrm{RTF} = \frac{\text{STT processing time}}{\text{Audio duration}}
\]
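To make the alignment step concrete, here is a minimal sketch of word-level Levenshtein alignment that also counts substitutions, deletions, and insertions. It is not the code in scripts/evaluate-vtt-metrics.js; `alignCounts` is a hypothetical name, and the real script's tie-breaking between equally cheap alignments may differ, which can shift the S/D/I split without changing the total edit count.

```javascript
// Hypothetical helper: align two token arrays and count edit types.
function alignCounts(refTokens, hypTokens) {
  const n = refTokens.length;
  const m = hypTokens.length;
  // cost[i][j] = minimum edits to turn ref[0..i) into hyp[0..j)
  const cost = Array.from({ length: n + 1 }, () => new Array(m + 1).fill(0));
  for (let i = 1; i <= n; i++) cost[i][0] = i; // only deletions possible
  for (let j = 1; j <= m; j++) cost[0][j] = j; // only insertions possible
  for (let i = 1; i <= n; i++) {
    for (let j = 1; j <= m; j++) {
      const same = refTokens[i - 1] === hypTokens[j - 1];
      cost[i][j] = Math.min(
        cost[i - 1][j - 1] + (same ? 0 : 1), // match or substitution
        cost[i - 1][j] + 1,                  // deletion: ref word missing in hyp
        cost[i][j - 1] + 1                   // insertion: extra hyp word
      );
    }
  }
  // Backtrace from the bottom-right corner, preferring the diagonal move.
  let i = n, j = m, S = 0, D = 0, I = 0;
  while (i > 0 || j > 0) {
    if (i > 0 && j > 0) {
      const same = refTokens[i - 1] === hypTokens[j - 1];
      if (cost[i][j] === cost[i - 1][j - 1] + (same ? 0 : 1)) {
        if (!same) S++;
        i--; j--;
        continue;
      }
    }
    if (i > 0 && cost[i][j] === cost[i - 1][j] + 1) { D++; i--; continue; }
    I++; j--;
  }
  return { S, D, I, N: n, wer: n === 0 ? 0 : (S + D + I) / n };
}

// Toy example: one dropped word ("brown") and one changed word ("jumps").
const toy = alignCounts(
  "the quick brown fox jumps".split(" "),
  "the quick fox jumped".split(" ")
);
console.log(toy); // S = 1, D = 1, I = 0, N = 5, wer = 0.4
```

The full table uses memory proportional to the product of the two lengths, which is acceptable for transcripts of a few thousand words but worth keeping in mind for much longer inputs.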

The script outputs two views:

- strictMetrics: default normalization

- relaxedMetrics: additional normalization (quotes/number formatting)

This helps distinguish formatting noise from true lexical/coverage mismatch.
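As an illustration of how two normalization views can differ, the sketch below defines a strict and a relaxed tokenizer. The specific rules are assumptions chosen for the example (lowercasing, trailing punctuation removal, quote unification, thousands separators); the article only says that the relaxed view adds quote and number-formatting normalization, and `normalizeStrict` and `normalizeRelaxed` are hypothetical names.

```javascript
// Assumed strict rules: lowercase, drop punctuation at word ends, split on whitespace.
function normalizeStrict(text) {
  return text
    .toLowerCase()
    .replace(/[.,!?;:]+(?=\s|$)/g, "")
    .split(/\s+/)
    .filter(Boolean);
}

// Assumed relaxed rules: unify quote characters and number formatting first,
// then apply the strict rules.
function normalizeRelaxed(text) {
  const unified = text
    .replace(/[\u2018\u2019]/g, "'")      // curly single quotes -> straight
    .replace(/[\u201C\u201D]/g, '"')      // curly double quotes -> straight
    .replace(/(\d),(?=\d{3}\b)/g, "$1");  // 1,000 -> 1000
  return normalizeStrict(unified);
}

const refText = "Don\u2019t pay 1,000 dollars.";
const hypText = "Don't pay 1000 dollars";

const sameStrict =
  normalizeStrict(refText).join(" ") === normalizeStrict(hypText).join(" ");
const sameRelaxed =
  normalizeRelaxed(refText).join(" ") === normalizeRelaxed(hypText).join(" ");

console.log(sameStrict);  // false: apostrophe style and "1,000" vs "1000" differ
console.log(sameRelaxed); // true: both differences are formatting only
```

If most disagreement were of this kind, the relaxed view would score noticeably better than the strict one.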

4. Model Overview

Whisper medium is a common speed/quality trade-off checkpoint in practical transcription stacks. It is often suitable for draft transcripts, indexing, and downstream NLP preprocessing, but still requires review for verbatim publishing or compliance-sensitive workflows.

This benchmark tests one fixed setup only (no decoder sweep, no custom post-correction, no domain lexicon boosting).

5. Results (From result.json)

Strict metrics (metrics / strictMetrics)

- Reference word count (N): 8,546

- Substitutions (S): 68

- Deletions (D): 5,722

- Insertions (I): 0

- WER: 0.6775099461736485

- Accuracy: 0.32249005382635154

- Reference character count: 32,329

- Character edit distance: 21,566

- CER: 0.6670790930743296

- Audio duration (seconds): 1,040

- STT processing time (seconds): 133

- RTF: 0.12788461538461537

- Eval script runtime (seconds): 56.703

Relaxed metrics (relaxedMetrics)

- WER: 0.6775099461736485

- Accuracy: 0.32249005382635154

- CER: 0.666760334707683

- Character edit distance: 21,355

- Reference character count: 32,028

Rounded interpretation

- Strict WER ≈ 67.75%, Accuracy ≈ 32.25%, CER ≈ 66.71%

- Relaxed WER ≈ 67.75%, Accuracy ≈ 32.25%, CER ≈ 66.68%

- WER is identical under both views and CER differs by only about 0.03 percentage points, so the mismatch is not mainly punctuation/formatting noise.

- RTF ≈ 0.128 (about 7.8× faster than real time)
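The rounded figures follow directly from the raw counts listed above. The snippet below recomputes them as a cross-check; the object and variable names are illustrative and do not reflect the actual layout of result.json.

```javascript
// Counts copied from the strict and relaxed result lists above.
const strictCounts = { N: 8546, S: 68, D: 5722, I: 0, charEdits: 21566, refChars: 32329 };
const relaxedCounts = { charEdits: 21355, refChars: 32028 };
const timing = { audioSeconds: 1040, sttSeconds: 133 };

const werValue = (strictCounts.S + strictCounts.D + strictCounts.I) / strictCounts.N;
const accuracyValue = 1 - werValue;
const cerStrict = strictCounts.charEdits / strictCounts.refChars;
const cerRelaxed = relaxedCounts.charEdits / relaxedCounts.refChars;
const rtfValue = timing.sttSeconds / timing.audioSeconds;
const speedup = 1 / rtfValue; // how many times faster than real time

const pct = (x) => (x * 100).toFixed(2) + "%";

console.log(pct(werValue), pct(accuracyValue));       // 67.75% 32.25%
console.log(pct(cerStrict), pct(cerRelaxed));         // 66.71% 66.68%
console.log(rtfValue.toFixed(3), speedup.toFixed(1)); // 0.128 7.8
```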

6. Error Pattern Analysis

Two signals stand out:

- Insertion = 0

- Deletion >> substitution (5,722 vs 68)

This pattern usually means many reference words are not aligned to hypothesis tokens. Typical causes include segmentation mismatch, truncated hypothesis coverage, or reference captions containing spans not reflected in model output.

Because strict and relaxed results are almost identical, normalization tweaks are not the main driver; coverage/alignment is likely dominant.
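One way to quantify the coverage gap is to derive the hypothesis length from the alignment counts: every reference word is either correct, substituted, or deleted, and every hypothesis word is either correct, substituted, or inserted. The sketch below applies that identity to the reported counts and adds a rough words-per-minute figure. The per-minute rates are a sanity check computed here, not values reported in result.json, and reading anything into them is an assumption that would need confirming against ref.vtt.

```javascript
// Reported strict counts and audio duration.
const N = 8546, S = 68, D = 5722, I = 0;
const audioMinutes = 1040 / 60;

// Reference words = correct + S + D, so:
const correct = N - S - D;
// Hypothesis words = correct + S + I.
const hypWords = correct + S + I;

const coverage = hypWords / N;             // hypothesis length relative to reference
const refWpm = N / audioMinutes;           // reference words per minute of audio
const hypWpm = hypWords / audioMinutes;    // hypothesis words per minute of audio

console.log(correct);                          // 2756
console.log(hypWords);                         // 2824
console.log((coverage * 100).toFixed(1));      // 33.0
console.log(Math.round(refWpm));               // 493
console.log(Math.round(hypWpm));               // 163
```

The hypothesis holds roughly a third as many words as the reference. The reference rate of about 493 words per minute is well above ordinary speaking rates, while the hypothesis rate of about 163 is in a plausible range, so inspecting ref.vtt for text that the audio does not contain once through (for example repeated cue text) is a reasonable first step before changing anything on the model side.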

7. Key Insights

- Speed: RTF is comfortably below 1, so throughput is practical for batch processing.

- Accuracy: ~68% WER is too high for quote-level publication without review.

- Error mode: Deletion-heavy profile suggests checking pairing/coverage before hyperparameter tuning.

- Method robustness: strict and relaxed metrics are close, so the headline numbers do not hinge on normalization choices.

- Representativeness: ~17.3 minutes is meaningful long-form input, but still only one clip/one setup.

8. Best Model for This Scenario

Under the narrow scope “Whisper medium + this exact clip + this exact reference source,” the run is a transparent baseline for future A/B comparisons. It does not claim universal superiority across all English YouTube transcription scenarios.

9. Neutral Final Verdict

For draft, indexing, and topic extraction workflows, this setup can be operationally useful. For verbatim publishing, compliance records, or accessibility-critical subtitles, current agreement levels still imply mandatory human correction or a stronger setup.

Keep the evaluation method fixed (scripts/evaluate-vtt-metrics.js) when iterating models so improvements remain comparable.

Source Materials

- Original audio (video): https://www.youtube.com/watch?v=7J96ESznKMQ

- Reference transcript (VTT): test-transcripts/{case-name}/ref.vtt

- Model transcript (VTT): test-transcripts/{case-name}/model.vtt

- Run metadata: test-transcripts/{case-name}/other.yaml

- Precomputed evaluation metrics: test-transcripts/{case-name}/result.json

{case-name} = 20260331. Evaluation script: scripts/evaluate-vtt-metrics.js.